Annelies Kusters, Robert Adam, Marion Fletcher and Gary Quinn

In the “great confinement” due to the COVID-19 pandemic, deaf people’s participation when working with interpreters in workplace settings is compromised in a number of ways. Meetings are now held online by default, with people using audio and/or video connections on online platforms such as Microsoft Teams. Meeting online may be a frequent or even standard practice after lockdowns are relaxed, due to new “waves” of the pandemic leading to further lockdowns, or simply because it becomes a “new normal” when people get used to it.

In this blog we discuss the practice of online meetings for deaf staff who work with interpreters in universities; the use of different platforms (Teams and Zoom) for these meetings; and how institutional policies surrounding the use of such platforms can lead to the exclusion of deaf staff. Our reason for homing in on Teams and Zoom is how universities contract out provision of platforms: Teams is widely used by universities for internal communication such as themed message boards, chats, sharing of files, and … meetings.

Our blog centres around a case study: Heriot-Watt University. At Heriot-Watt University, what we call “the BSL team” contains three deaf permanent staff (all three are co-authors of this blog), two deaf postdocs, five deaf PhD students and three deaf honorary scholars, as well as a number of hearing signers. We use Zoom for meetings in the BSL team, and below we explain the reasons for doing so. The three deaf permanent staff also have to participate in department and school meetings and other meetings, such as those on research strategy, in addition to training (which we have to undertake as part of our professional development). For these meetings and trainings we work with the three in-house interpreters (one of whom is co-author of this blog). These meetings and trainings are currently organised on Microsoft Teams.

Challenges in online meetings when working with sign language interpreters

For online meetings, rather than the interpreter sitting or standing in a well-considered location (e.g. next to a speaker), the interpreters and the participants are now all on a screen. For deaf staff, this means that interpreters are now accessed in 2D rather than 3D, even though sign language is three-dimensional, and via an internet connection that may be unreliable. This makes an already demanding task even more challenging: interpreted language, as we know, is generally more difficult to comprehend than “natural” language production. Also, many (non-deaf) people have found that online meetings are more tiring than meetings in real life. The combination of meeting online and working with interpreters means that it takes even more attention and energy to follow an online meeting. When watching signing over meeting platforms, especially interpreted signing, deaf professionals quickly become used to having to “fill in the gaps” where one or more signs are missing when the connection momentarily freezes; much like the practice of lipreading! We also need to adapt our signing: when we sign to an interpreter in online meetings, we sometimes need to think even more about how we construct our message, signing more slowly and more to the point so that the interpretation process is not delayed too much. Sometimes it helps to use the chat function on the meeting platform to clarify certain terms (e.g. fingerspelled terms).

When two interpreters are co-working, switching from one interpreter to the other can be difficult in a way that it is not in face-to-face settings. Alerting the co-worker that it’s time to swap is tricky: the interpreters have to find the most suitable and least distracting way of doing so (e.g. using private chat, waving, …). The same is true when the co-worker intervenes to repair an utterance that the signing interpreter may have missed, which happens more frequently now due to technical problems with the audio feed. These sudden swaps and repairs can also be jarring for deaf people who watch the interpreters.

MS Teams – features/interface/opportunities/challenges

In Microsoft Teams meetings in our department, we have to “pin” the interpreters, and currently a maximum of four videos can be pinned at the same time (in the next version of Teams this will be nine). In recent meetings, it has sometimes been the case that deaf participants could not pin the interpreter, meaning that they couldn’t see the interpreter throughout. When deaf participants have been able to pin the interpreter, the pinning has not worked properly and the pinned person repeatedly disappeared from the screen during the meeting. If two interpreters are working and three deaf colleagues are attending a meeting, they can’t see the interpreters, the presenter and each other at the same time (since that’s more than four people). So if one of them asks a question or makes a comment, the other deaf participants can’t see it. Teams at the moment offers very little control and we have all felt excluded as a result, as it is not suitable for signing or for interpreting.

Even when the pinning works, there is another challenge. When we watch an interpreter in real life meetings and in presentations and teaching settings, we do not just watch the interpreter. We also watch the hearing non-signers: we look at the mouth movements, facial expressions and body language of the person who is speaking and the responses of other persons in the room. In large meetings on Microsoft Teams, when tens or hundreds of colleagues participate at the same time, participants often switch off their video and audio and only switch on one or both of them when they want to comment. In other words, people may not see and hear each other until they want to say something, and even then, they may only hear the speaker. When we cannot see the speaker, we can access the subtexts and atmosphere of messages only via the interpreters.

Zoom – features/interface/opportunities/challenges

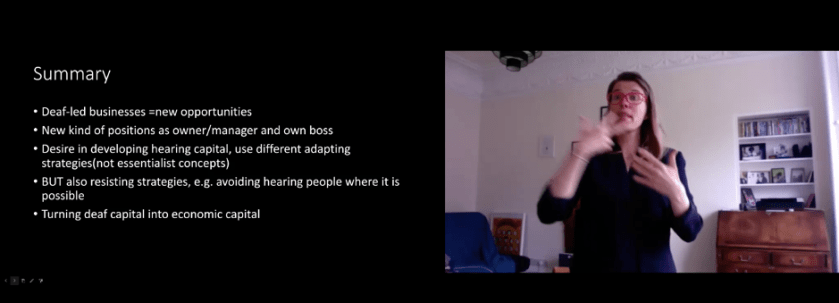

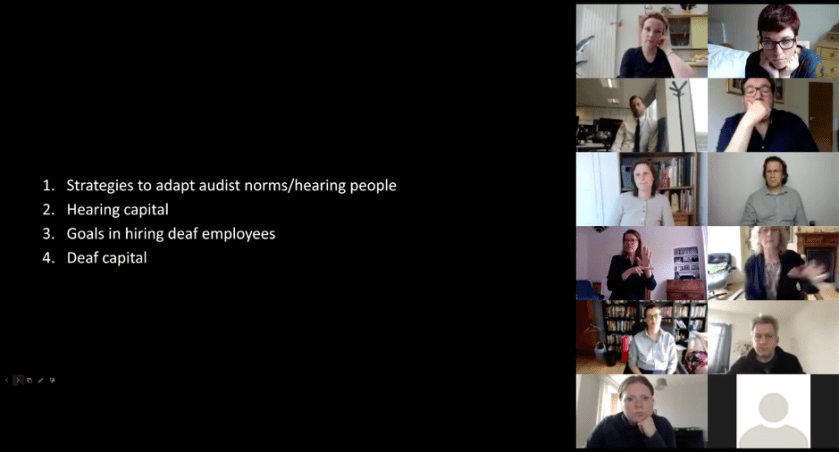

We have found another platform (Zoom) more suitable for our meetings at this moment. At Heriot-Watt University there are several kinds of meetings where only deaf and hearing signers participate: the fortnightly BSL team research meetings, fortnightly BSL team admin meetings and weekly MobileDeaf team meetings. These meetings do not need to be interpreted since they allow for direct communication. In Zoom it is easy to see all the windows at the same time (see figure 1, which is a picture from a BSL research meeting). We have learnt that it is important to avoid overlaps in signing (i.e. to do “clean” turn-taking), and that we can decide to pin a person who is giving a presentation (see figure 2), or to see all other participants during a discussion while also watching a PPT (or something else, such as a Word document or website) (see figure 3). Zoom can show up to 25 people on one screen; if there are more than 25, it splits the participant windows across device screens, and it is up to the individual to select which device screen to watch.

Figure 1: BSL research meeting in Zoom

Figure 2: pin a presenter in Zoom

Figure 3: watching a PPT and all participants in Zoom

At the request of deaf staff, who pointed out that Teams was not accessible, we have used Zoom for some research centre meetings with hearing non-signing colleagues. This worked well. In meetings on Teams, when we don’t see the speakers, it can be difficult to know who is speaking, although the interpreter can help by flagging up clearly and repeatedly who is speaking. In Zoom it is very easy to see who is speaking or signing: we see them signing if they are a signer, and when people speak with voice their window edges will light up and their name will be on top of the participant list (after the Host). We can see the name and the face of the person – which is handy if we didn’t know the names of some colleagues yet, especially for Robert who just joined the team on 1st of April! We can see hearing colleagues’ facial expressions and get a better sense of the atmosphere of the meeting. Meetings on Zoom feel closer to “real life” meetings than meetings on Teams, more comfortable and more inclusive. Further, the video quality is much better on Zoom than on Teams, even when people have patchy connections. It is not for nothing that the use of Zoom has boomed.

University policies about platforms, and barriers for deaf employees

While a number of our non-signing colleagues who ran small meetings (e.g. of research centres) agreed to hold these meetings on Zoom, most of our requests to migrate non-signers’ meetings to Zoom have met with structural barriers that are in place at our university. We have learnt that many other deaf academics in other universities in the UK and Europe (and probably beyond) have the same problem: a number of universities insist on the use of Teams since it is an “internal system”, it is already included in the Microsoft package, and it is said to be more secure than other platforms.

It may be possible to work with Teams in a successful way but, so far, not in our university where meetings frequently involve sharing of PPT files, are organised by different chairs and with varying numbers of participants (up to hundreds of participants). Zoom is often said to be “not secure enough”. We know that Zoom is working on that (just like Teams is working on updates) – and there are platforms other than Zoom with similar functionality (e.g. Jitsi) that could be considered. Staff in some universities such as Gallaudet University and Norwegian universities are allowed and even encouraged to use Zoom and staff are provided with special licensed versions, so the security argument is not even uniform or valid everywhere. The point we want to make here is not about the technological shortcomings of any of the platforms per se, but that arguments such as “security” can cause or perpetuate exclusion.

Even if a system is not as secure as it should be, its use could be a temporary solution, and another solution could be to postpone meetings until they are accessible. Instead, participation of deaf people is jeopardized when meetings are held on platforms that are not suitable for signing. By just continuing to hold inaccessible meetings, the signal that is sent to deaf staff and their hearing colleagues is that deaf staff’s participation in the university community is of subordinate importance, and that it doesn’t really matter if we are there or not. At HWU a number of people are currently actively looking at solutions, but it took weeks of lobbying before we were really heard by our superiors about this issue. In this process, it has helped us to be clear and vocal, and to ask for the support of colleagues who acted as allies, for example by sending joint letters or by not attending inaccessible meetings. In short, institutional policies about the use of platforms should not be treated as if they are set in stone, but should be challenged if they lead to exclusion.

Annelies Kusters is Associate Professor in Sign Language and Intercultural Research at Heriot-Watt University. She currently leads a deaf research team focusing on intersectionality and translanguaging in the context of international deaf mobilities, called MobileDeaf. She’s on Twitter as @annelieskusters

Robert Adam commenced work as Assistant Professor at Heriot-Watt University, Department of Languages and Intercultural Studies, in the BSL section on 1st April 2020. He has research interests in sign language, interpreting, translation, minority languages and multilingualism and his PhD studies at UCL focussed on minority language contact between two sign languages. He is coordinator of the World Federation of the Deaf sign language and deaf studies expert group. He’s on Twitter as @rejadam

Marion Fletcher is a BSL/English interpreter (PGDip UCLan) who works as a full-time staff member at Heriot-Watt University, where she manages a small team of in-house interpreters. She achieved her Masters of Education from Stirling University in 2000 and has specialised in interpreting in academic settings.

Gary Quinn is an Assistant Professor at Heriot-Watt University, where he has taught BSL and Linguistics to MA interpreting students since 2006. Working in partnership with Edinburgh University, he is also involved with developing signs for science and mathematics for use by schools and universities. He achieved his Masters in Language Studies at Lancaster University and is now studying for a PhD in BSL linguistics. He’s on Twitter as @GaryAustinQuin2

Thank you for sharing! Useful to see your vlog, and highly recognizable points. I have similar challenges as you describe. Although I am alone as deaf PhD-candidate where I work I have challenges using Teams and other digital platforms for meetings and conversations. I look forward to see more vlogs!

Jitsi!!!!!!!!!!!!

Thank you. This is helpful to me as someone who will be co-hosting a meeting that will include a deaf person and their BSL interpreter. I have a basic awareness of how to make things accessible (and very basic BSL), but no actual lived experience as a deaf or hard of hearing person, nor as a BSL interpreter.

Now that Teams supports up to 49 simultaneous participants in Large Gallery view, is it seen to be more suitable now? Pinning seems to work pretty well for me – have you found it has improved?